AGI Alignment Experiments: Foundation vs INSTRUCT, various Agent

By a mysterious writer

Description

This experiment has a companion video, a GitHub repo with data and code, and a writeup.

Recursive Self Referential Reasoning

This experiment is meant to demonstrate the concept of “recursive, self-referential reasoning”, whereby a Large Language Model (LLM) is given an “agent model” (an identity defined in natural language) and its thought process is evaluated in a long-term simulation environment.

Here is an example of an agent model. This one tests the Core Objective Function

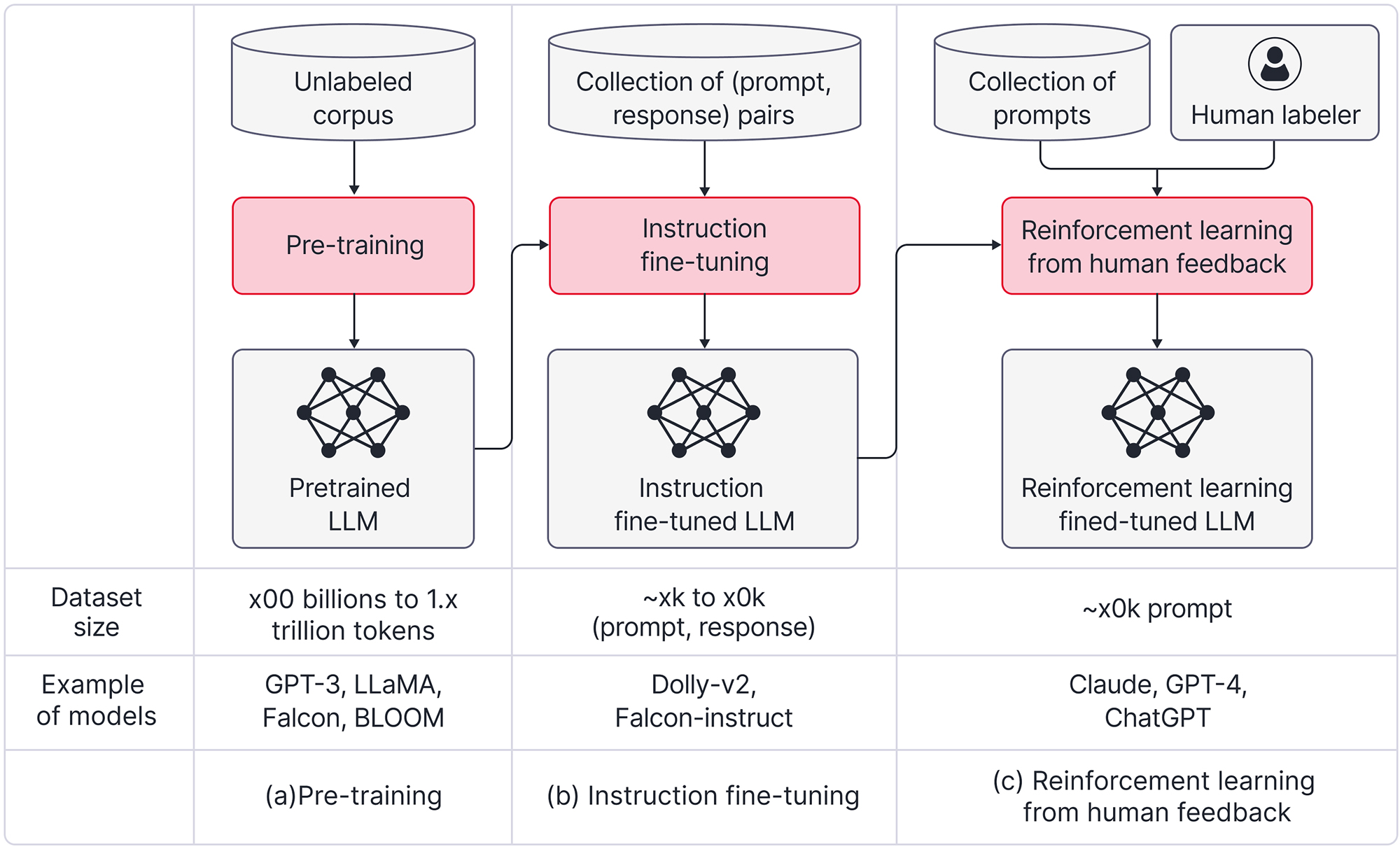

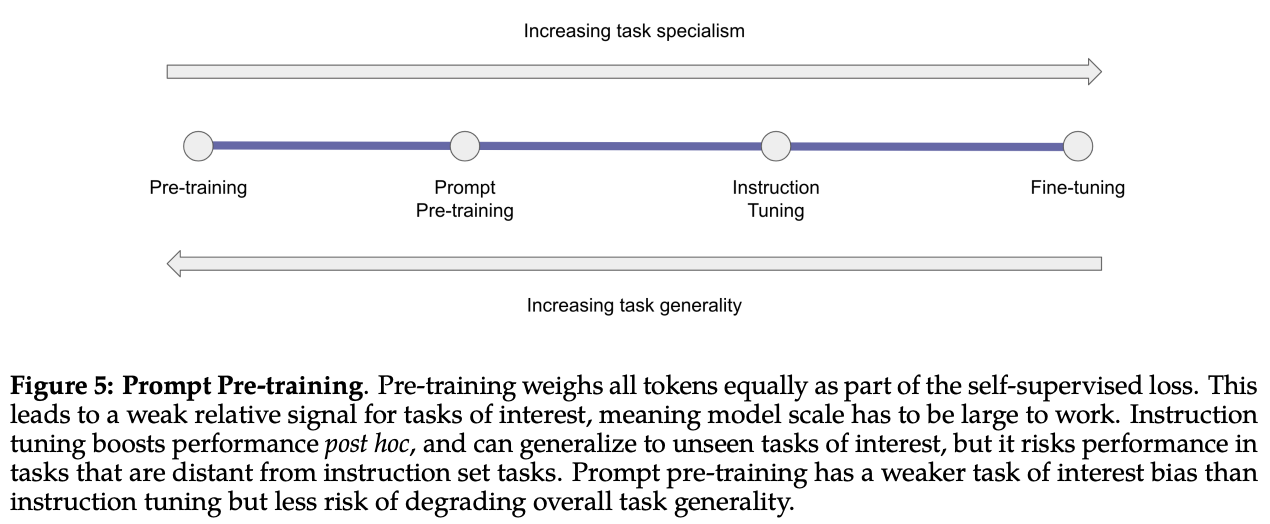
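The loop described above, where an agent model is held fixed and the model's own earlier thoughts are fed back into each new prompt, can be sketched roughly as follows. This is only a minimal illustration, not the code from the repo: `run_simulation`, `stub_llm`, the prompt layout, and the placeholder agent text are all hypothetical, and `stub_llm` stands in for a real LLM call.

```python
from typing import Callable, List


def run_simulation(agent_model: str, llm: Callable[[str], str], steps: int) -> List[str]:
    """Run a simple self-referential loop (hypothetical sketch).

    Each step builds a prompt from the fixed agent model plus every
    thought produced so far, then appends the model's next thought.
    """
    thoughts: List[str] = []
    for _ in range(steps):
        history = "\n".join(thoughts)
        prompt = f"{agent_model}\n\nPrevious thoughts:\n{history}\n\nNext thought:"
        thoughts.append(llm(prompt).strip())
    return thoughts


def stub_llm(prompt: str) -> str:
    """Placeholder for a real LLM call: it only counts the prior thoughts."""
    after_header = prompt.split("Previous thoughts:\n", 1)[1]
    prior = after_header.rsplit("\n\nNext thought:", 1)[0]
    count = len([line for line in prior.splitlines() if line])
    return f"thought {count + 1}"


if __name__ == "__main__":
    # The agent model text below is a placeholder, not the one from the experiment.
    for thought in run_simulation("I am a hypothetical agent.", stub_llm, steps=3):
        print(thought)
```

Swapping `stub_llm` for a function that calls a foundation or instruction-tuned model would let the same loop compare how each one reasons under the same agent model over many steps.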

A High-level Overview of Large Language Models - Borealis AI

Survey XII: What Is the Future of Ethical AI Design?, Imagining the Internet

Machines that think like humans: Everything to know about AGI and AI Debate 3

Specialized LLMs: ChatGPT, LaMDA, Galactica, Codex, Sparrow, and More, by Cameron R. Wolfe, Ph.D.

We Don't Know How To Make AGI Safe, by Kyle O'Brien

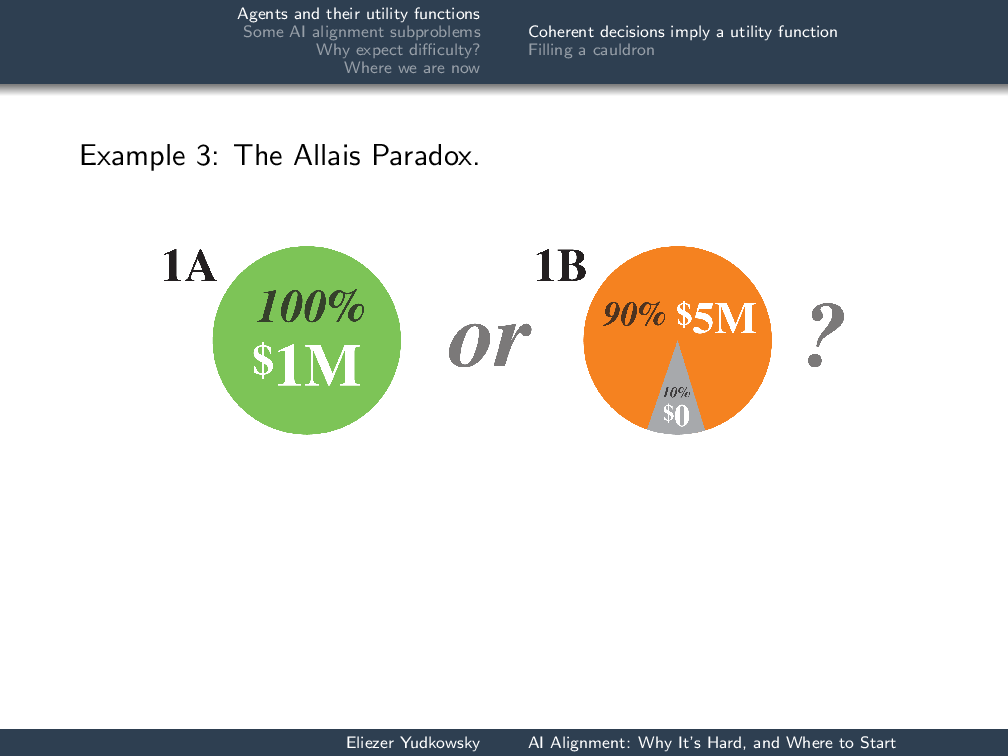

AI Alignment: Why It's Hard, and Where to Start - Machine Intelligence Research Institute

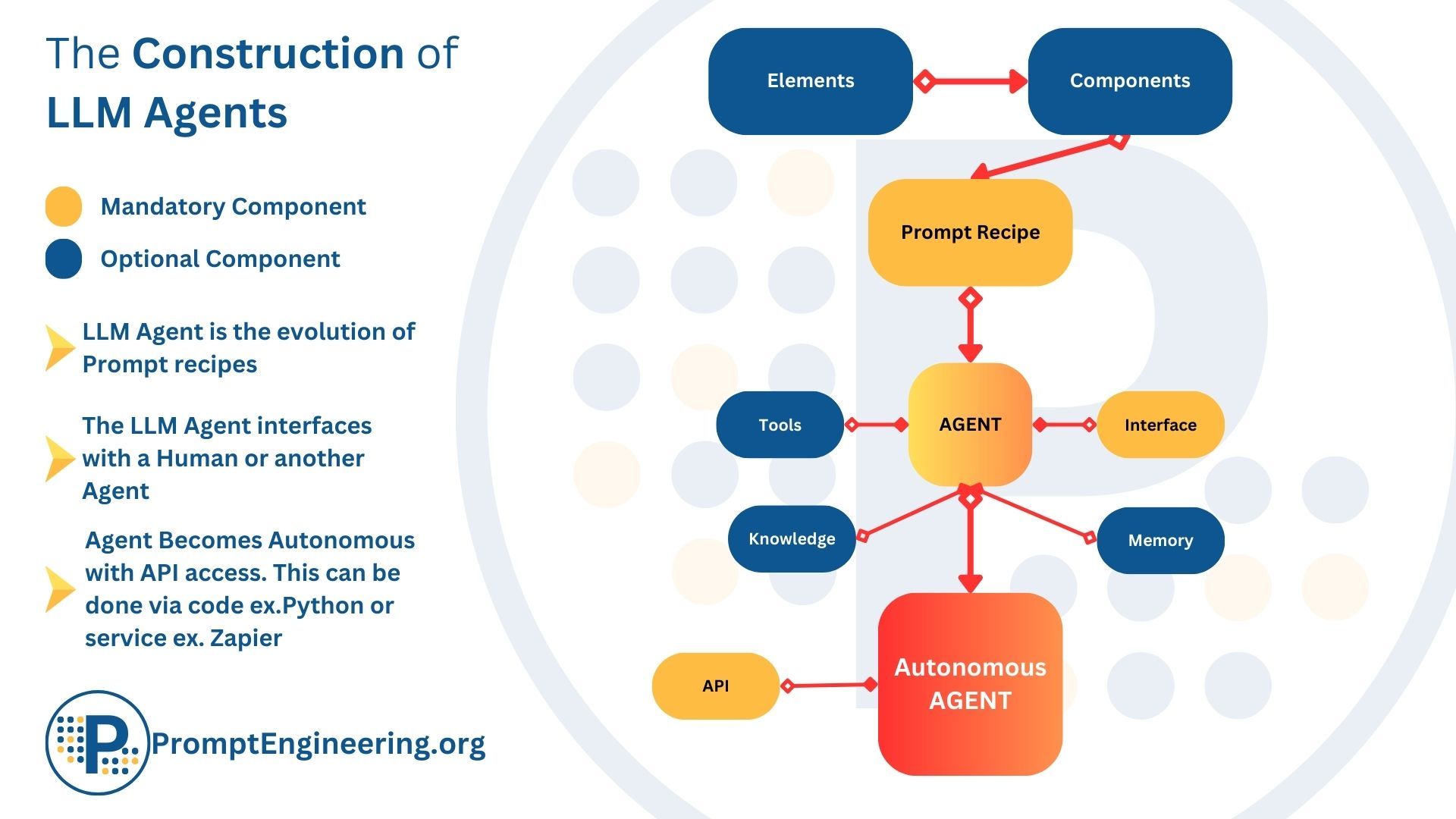

What Are Large Language Model (LLM) Agents and Autonomous Agents

Simulators :: — Moire

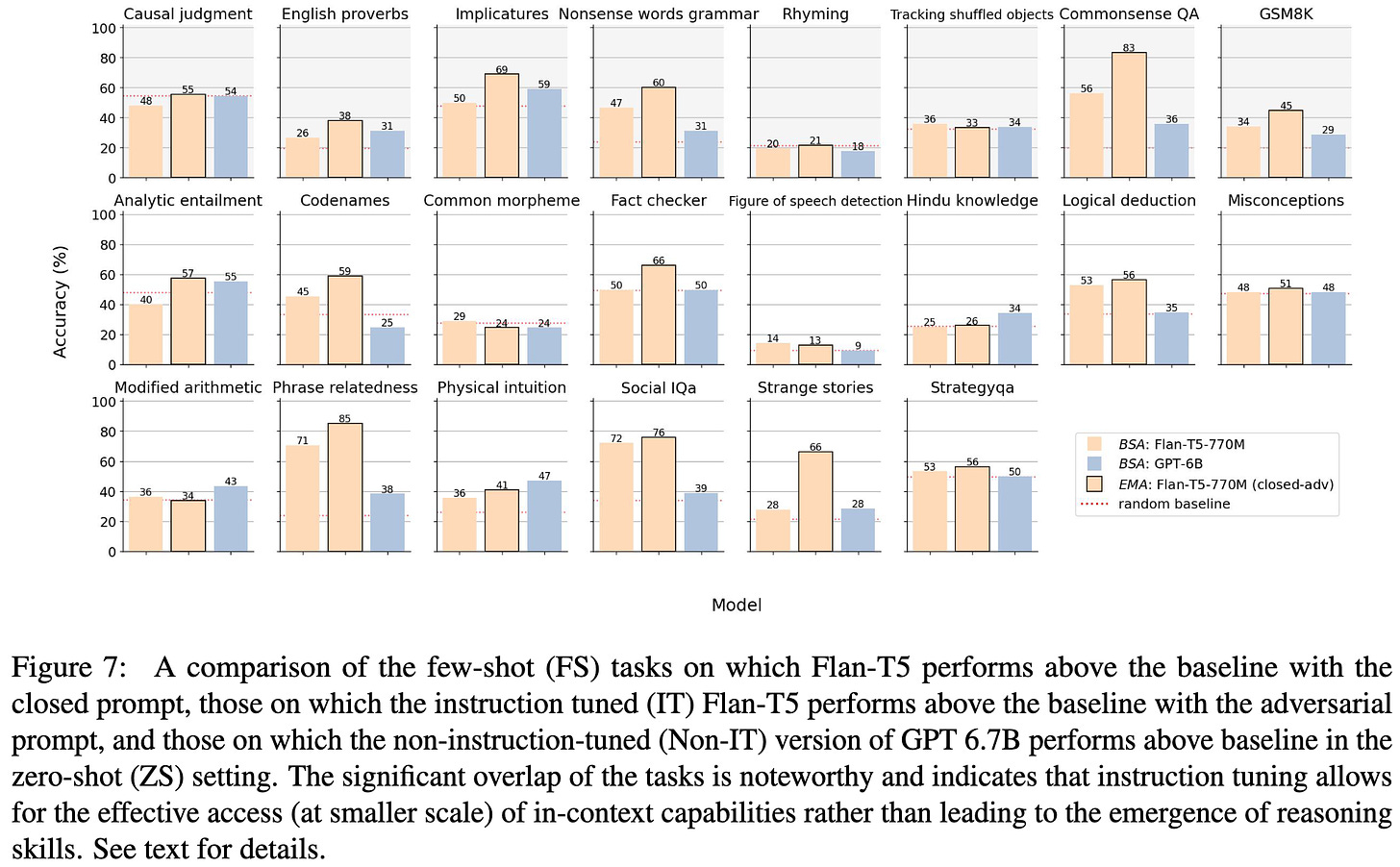

2023-9 arXiv roundup: A bunch of good ML systems and Empirical science papers

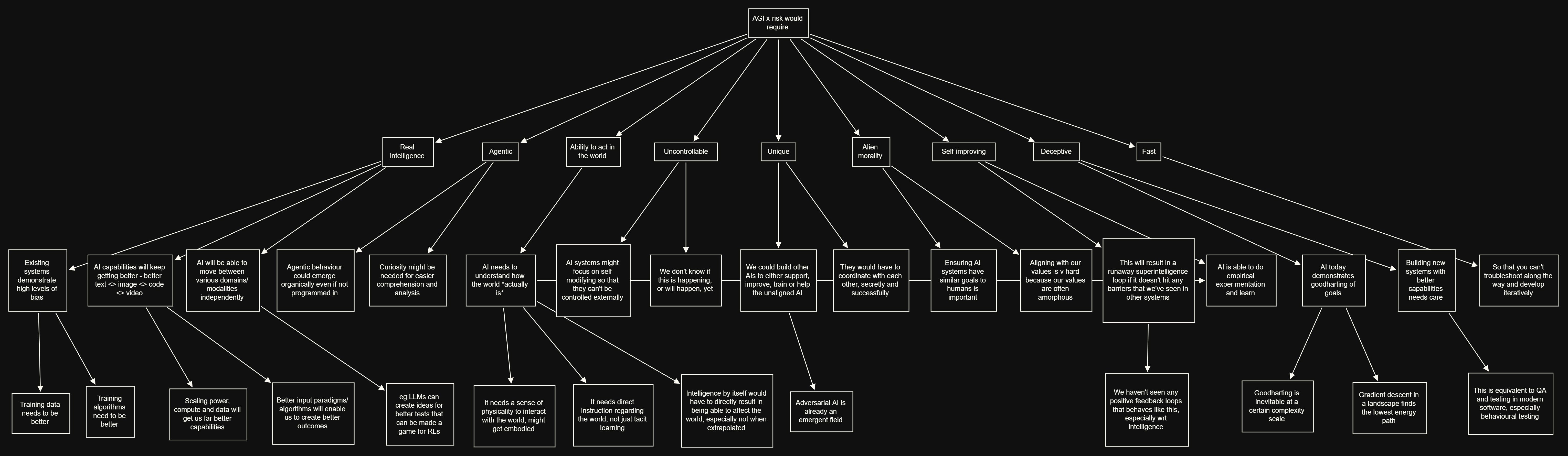

Artificial General Intelligence and how (much) to worry about it
